News

Software release: MFEM v4.6

Version 4.6 of MFEM was released on September 27, 2023. Some of the new additions in this release are:

- NURBS meshing and discretization improvements.

- SubMesh support for H(curl) and H(div) transfers.

- TMOP enhancements and mesh optimization miniapps.

- New H(div) matrix-free saddle-point solver.

- Ultraweak DPG formulation for acoustics and Maxwell.

- Stochastic PDE method for Gaussian random fields.

- Obstacle problem and topology optimization examples.

- k-d tree for parallel grid function repartitioning.

- HIP support in the PETSc and SUNDIALS interfaces.

- Improved GPU code debugging.

- and much more!

For more details, see the interactive documentation at https://mfem.org and the full CHANGELOG.

LLNL recaps 7 years of CEED

The DOE launched the ECP in 2016 to mobilize hardware, software, and application integration efforts in preparation for exascale-class supercomputers, which are capable of at least a quintillion calculations per second. In the years since, the DOE has launched the nation’s exascale era with Oak Ridge National Lab’s Frontier system, which will soon be followed by Argonne National Lab’s Aurora and Livermore’s El Capitan. As the ECP’s mandate formally concludes this autumn, so too does CEED’s.

Although the center’s focus on improving high-order discretization algorithms is narrow, its impact is anything but. According to director Tzanio Kolev, CEED has pushed the boundaries of what’s possible with high-order methods. He states, “We’ve proven the value and benefits of these methods. The software we’ve developed advances research in the high-order ecosystem including meshing, discretization, solvers, and GPU portability.” Visit LLNL's Computing website to read a new article summarizing CEED's goals and accomplishments.

CEED annual meeting 2023

CEED held its seventh and final annual meeting August 1-3, 2023 in a hybrid format: in-person at Lawrence Livermore National Laboratory's University of California Livermore Collaboration Center and virtually using ECP Zoom for videoconferencing and Slack for side discussions.

The goal of the meeting was to report on the progress in the center, deepen existing and establish new connections with ECP hardware vendors, ECP software technologies projects and other collaborators, plan project activities and brainstorm/work as a group to make technical progress.

In addition to gathering together many of the CEED researchers, the meeting included representatives of the ECP management, hardware vendors, software technology and other interested projects.

See the meeting page for additional information. Read a recap of the meeting on LLNL's Computing website.

Software release: MFEM v4.5.2

Version 4.5.2 of MFEM was released on March 23, 2023. Some of the new additions in this release are:

- Support for pyramids in non-conforming meshes.

- Removed the support for the Mesquite toolkit. Use MFEM's TMOP functionality instead.

- New fast normalization-based distance solver.

- Option to auto-balance compound TMOP metrics.

- Support for shared Windows builds with MSVC through CMake.

For more details, see the interactive documentation at https://mfem.org and the full CHANGELOG.

Software release: MFEM v4.5

Version 4.5 of MFEM was released on October 22, 2022. Some of the new additions in this release are:

- MFEM Docker container and cloud support.

- New and improved integrations with Enzyme, Algoim, ParMoonolith, hypre, SuperLU, SUNDIALS, PETSc and libCEED.

- Efficient GPU kernels for LOR matrix assembly and DG mass operator inversion.

- Full assembly and device support for LinearForm integrators.

- Partial assembly and matrix-free operators on mixed meshes through libCEED.

- New miniapp, Hooke, showcasing automatic differentiation for nonlinear elasticity.

- Improved mesh optimization and physical space interpolation.

- Support for submesh extraction.

- New fractional PDE example.

For more details, see the interactive documentation at https://mfem.org and the full CHANGELOG.

CEED annual meeting 2022

CEED held its sixth annual meeting August 9-11, 2022 in a hybrid format: in-person at the Siebel Center for Computer Science on the UIUC campus in Urbana and virtually using ECP Zoom for videoconferencing and Slack for side discussions.

The goal of the meeting was to report on the progress in the center, deepen existing and establish new connections with ECP hardware vendors, ECP software technologies projects and other collaborators, plan project activities and brainstorm/work as a group to make technical progress.

In addition to gathering together many of the CEED researchers, the meeting included representatives of the ECP management, hardware vendors, software technology and other interested projects.

See the meeting page for additional information.

Software release: libCEED v0.10.0

Version 0.10 of libCEED was released on March 21, 2022. Some of the new additions in this release are:

- Support for single precision

- Capability to assemble operators on GPUs

- Performance enhancements

- Various interface and error checking improvements

- Mini-app improvements

For more details, see the documentation and the full release notes.

Software release: MFEM v4.4

Version 4.4 of MFEM was released on March 21, 2022. Some of the new additions in this release are:

- Support for AMG solvers on AMD GPUs.

- New hr-adaptivity and interface fitting of high-order meshes.

- High order Nedelec elements on tetrahedral meshes without reordering.

- GPU-enabled partial and element assembly for DG on AMR meshes.

- Initial support for meshes with pyramidal elements.

- Arbitrary order Nedelec and Raviart-Thomas elements on prisms.

- New and improved integrations with libCEED, CoDiPack, ParELAG.

- Documentation for all releases at https://docs.mfem.org.

- 9 new examples and miniapps, including AD and Jupyter examples.

For more details, see the interactive documentation at https://mfem.org and the full CHANGELOG.

Software release: FMS v0.2

Version 0.2 of FMS was released on September 10, 2021. Some of the new additions in this release are:

- Lightweight API to represent general finite element meshes + fields

- I/O in ASCII and Conduit binary format

- FMS visualization support in VisIt v3.2

- Common, easy to use framework

For more details, see the CHANGELOG.

CEED annual meeting 2021

CEED held its fifth annual meeting August 3-4, 2021 virtually using ECP Zoom for videoconferencing and Slack for side discussions.

The goal of the meeting was to report on the progress in the center, deepen existing and establish new connections with ECP hardware vendors, ECP software technologies projects and other collaborators, plan project activities and brainstorm/work as a group to make technical progress.

In addition to gathering together many of the CEED researchers, the meeting included representatives of the ECP management, hardware vendors, software technology and other interested projects.

See the meeting page for additional information.

Software release: MFEM v4.3

Version 4.3 of MFEM was released on July 29, 2021. Some of the new additions in this release are:

- Variable order spaces, serial p- and hp-refinement.

- Low order refined discretizations, solvers and transfer.

- Preconditioners for advection-dominated systems.

- Support for GPU solvers from hypre and PETSc.

- GPU-accelerated mesh optimization algorithms.

- Explicit vectorization for Fujitsu's A64FX ARM microprocessor.

- Support for high-order Lagrange meshes in VTK format.

- New and improved integrations with FMS, Caliper, libCEED, Ginkgo.

- 11 new examples and miniapps.

For more details, see the interactive documentation at https://mfem.org and the full CHANGELOG.

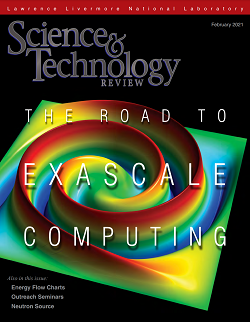

CEED and ECP featured in S&TR cover story

The latest issue of LLNL's Science & Technology Review magazine showcases CEED work in the cover story. The cover art shows an advection simulation powered by MFEM and GLVis.

- Full issue (and PDF version)

- Commentary: To Exascale and Beyond by LLNL Computing associate director Bruce Hendrickson

- Cover story: The Exascale Software Portfolio featuring ECP deputy director Lori Diachin and CEED director Tzanio Kolev, among others

Abstract: As a leader in high-performance computing, LLNL wields a large portion of the DOE's HPC resources to advance national security and foundational science. The Sierra supercomputer supports the National Nuclear Security Administration's Stockpile Stewardship Program by enabling more accurate, more predictive simulations. This generation of computers is known as heterogeneous, or hybrid, because their architectures combine graphics processing units and central processing units to achieve peak performance well above 100 petaflops. (A petaflop is 10^15 floating-point operations per second.) The next generation's processing capability—at least an exaflop (10^18 flops)—will be many times greater. HPC software must adjust to these new hardware standards. As the exascale era begins, two major initiatives leverage and expand Livermore's HPC capabilities, with a spotlight here on software. The Exascale Computing Project, a joint effort between the DOE Office of Science and NNSA, brings together several national laboratories to address many hardware, software, and application challenges inherent in the organizations' scientific and national security missions. Within the Laboratory, the RADIUSS project aims to benefit scientific applications through a robust software infrastructure.

ECP annual meeting video highlights

The ECP's annual meeting was held virtually this year on April 12-16. Several sessions are available in a YouTube playlist. The Characterizing Performance Improvements in the Center for Efficient Exascale Discretizations session featured speakers from ECP Application Development areas—including Tzanio Kolev for CEED—to discuss how they set figures of merit, determined key performance parameters, and calculated efficiency of codes. The video concludes with a panel discussion of the speakers. Runtime is 1:00:04; the CEED section begins at 25:05.

Software release: MFEM v4.2

Version 4.2 of MFEM was released on October 30, 2020. Some of the new additions in this release are:

- Extended partial assembly algorithms and device support, including specific HIP and CUDA improvements.

- AMG preconditioning on GPUs via NVIDIA's AmgX.

- Element-level and full sparse matrix assembly on GPUs.

- Support for explicit vectorization on Intel and IBM platforms.

- Improved mesh optimization and discretization algorithms.

- Support for matrix-free geometric h- and p-multigrid.

- New integrations with CVODES, MKL CPardiso, SLEPc and ADIOS2.

- libCEED, GSLIB-FindPoints, KINSOL, Gmsh, Gecko and ParaView improvements.

- 18 new examples and miniapps.

For more details, see the interactive documentation at https://mfem.org and the full CHANGELOG.

Software release: libCEED v0.7

Version 0.7 of libCEED was released on September 29, 2020. The release includes

- New HIP backend

- Revamped OCCA backend

- Restriction by offsets instead of blocked indices

- Improved examples

- Improved solver ingredients

- Numerous bug fixes

- and more...

MFEM R&D100 finalist

The MFEM finite element library was selected as a finalist for this year's R&D100 awards in the Software/Services category for its development of advanced discretization algorithms for HPC applications.

CEED annual meeting 2020

CEED held its fourth annual meeting August 11-12, 2020 virtually using ECP Zoom for videoconferencing and Slack for side discussions.

The goal of the meeting was to report on the progress in the center, deepen existing and establish new connections with ECP hardware vendors, ECP software technologies projects and other collaborators, plan project activities and brainstorm/work as a group to make technical progress.

In addition to gathering together many of the CEED researchers, the meeting included representatives of the ECP management, hardware vendors, software technology and other interested projects.

See the meeting page for additional information.

MFEM video posted to YouTube

A new video titled "MFEM: Advanced Simulation Algorithms for HPC Applications" is available on YouTube. Team members Tzanio Kolev, Veselin Dobrev, Will Pazner, and Jean-Sylvain Camier describe how MFEM benefits scientific applications, the advantages of MFEM's advanced mathematical algorithms, and how it supports multiple hardware configurations (such as GPU-based architectures). Computational physicist Rob Rieben also discusses how he uses MFEM in inertial confinement fusion simulations.

The video runs 7:06 and includes both closed captioning and a transcript.

Software release: CEED v3.0

The CEED team released CEED 3.0 consisting of 12 integrated Spack packages for libCEED, mfem, nek5000, nekcem, laghos, nekbone, hpgmg, occa, magma, gslib, petsc and pumi plus a new CEED meta-package.

With Spack, a user can install the whole CEED software stack simply with: spack install ceed.

Visit the CEED 3.0 release page for further information about the release and installation help for various systems.

Software release: Remhos v1.0

Initial release of Remhos, a new miniapp for monotonic+conservative DG advection-based high-order field interpolation (remap).

Remhos captures the basic structure of the Eulerian phase in many Arbitrary-Lagrangian Eulerian (ALE) simulations, including the BLAST code at Lawrence Livermore National Laboratory.

For more information visit https://github.com/CEED/Remhos.

Software release: libCEED v0.6

Version 0.6 of libCEED was released on March 29, 2020. The release includes

- New documentation and additional examples

- Improved interface, including new Python interface

- Preconditioning ingredients

- Performance improvements in the MAGMA backend

Software release: Laghos v3.0

Version 3.0 of the Laghos miniapp was released on March 27, 2020. The release includes

- Replacement for the Laghos-2.0 custom implementations in the cuda/, raja/, occa/ and hip/ directories with direct general device support in the main Laghos sources based on MFEM-4.1.

- With the above change different device backends can be selected at runtime, including cuda, raja, occa, hip, omp and more. See the -d command-line option.

- Added setup makefile target to download and build the Laghos dependencies: HYPRE (2.11.2), METIS (4.0.3) and MFEM (master branch).

- Added tests and checks makefile targets to launch non-regression tests.

- Added default dimension options that generate internally the mesh in 1D, 2D and 3D.

- The timing/ directory was deprecated. Use the scripts in the CEED benchmarks instead.

Software release: MFEM v4.1

Version 4.1 of MFEM was released on March 10, 2020. Some of the new additions in this release are:

- A new BSD license

- Improved GPU capabilities including support for HIP, libCEED, Umpire and debugging

- GPU acceleration in additional examples, finite element and linear algebra kernels

- Many meshing, discretization and matrix-free algorithmic improvements

- ParaView, GSLIB-FindPoints, HiOp and Ginkgo support

- 18 new examples and miniapps

- Significantly improved testing

For more details, see the interactive documentation at https://mfem.org and the full CHANGELOG.

Software release: libCEED v0.5

Version 0.5 of libCEED was released in September 2019. The release includes

- new cuda-gen backend achieving state-of-the-art performance

- single-source QFunctions

- various performance improvements, bug fixes, new examples, and improved tests

Third CEED annual meeting at Virginia Tech

CEED held its third annual meeting August 6-8, 2019 at Virginia Tech.

The goal of the meeting was to report on the progress in the center, deepen existing and establish new connections with ECP hardware vendors, ECP software technologies projects and other collaborators, plan project activities and brainstorm/work as a group to make technical progress.

In addition to gathering together many of the CEED researchers, the meeting included representatives of ECP applications, hardware vendors, software technology and other interested projects.

See the meeting page for additional information.

Software release: MFEM v4.0

Version 4.0 of MFEM was released on May 24, 2019. Some of the new additions in this release are:

- Added initial support for hardware devices, such as GPUs, and programming models, such as CUDA, OCCA, RAJA and OpenMP.

- Added support for fast operator evaluation based on different assembly level representations, e.g. partial assembly.

- Added support for wedge elements and meshes with mixed element types.

- Five new examples and miniapps.

For more details, see the interactive documentation at https://mfem.org and the full CHANGELOG.
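The partial assembly idea mentioned in the v4.0 list above can be illustrated with a minimal pure-Python sketch. This is not MFEM code: it assumes a single 1D linear element of hypothetical length h, a two-point Gauss rule, and a mass operator written as B^T D B. The names B, D, apply_partial and assemble_full are chosen only for this illustration.

```python
import math

# Two-point Gauss-Legendre rule on the reference interval [-1, 1].
g = 1.0 / math.sqrt(3.0)
points = [-g, g]
weights = [1.0, 1.0]

# B[q][i]: value of linear basis function i at quadrature point q.
B = [[0.5 * (1.0 - x), 0.5 * (1.0 + x)] for x in points]

# D[q]: quadrature weight times Jacobian determinant (h / 2) for an
# element of hypothetical length h.
h = 0.5
D = [w * h / 2.0 for w in weights]


def apply_partial(x):
    """Apply the mass operator as B^T (D * (B x)) without forming a matrix."""
    at_quad = [sum(B[q][i] * x[i] for i in range(2)) for q in range(2)]
    scaled = [D[q] * at_quad[q] for q in range(2)]
    return [sum(B[q][i] * scaled[q] for q in range(2)) for i in range(2)]


def assemble_full():
    """Form the element matrix M = B^T D B explicitly, for comparison."""
    return [[sum(B[q][i] * D[q] * B[q][j] for q in range(2))
             for j in range(2)]
            for i in range(2)]


x = [1.0, 2.0]
M = assemble_full()
y_full = [sum(M[i][j] * x[j] for j in range(2)) for i in range(2)]
y_pa = apply_partial(x)
print(y_full, y_pa)
```

Both paths give the same result. The partial form stores only B and the quadrature data D, which is what makes it attractive at high order, where the assembled element matrices become large.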

Software release: CEED v2.0

The CEED team released CEED 2.0 consisting of 12 integrated Spack packages for libCEED, mfem, nek5000, nekcem, laghos, nekbone, hpgmg, occa, magma, gslib, petsc and pumi plus a new CEED meta-package.

With Spack, a user can install the whole CEED software stack simply with: spack install ceed.

Visit the CEED 2.0 release page for further information about the release and installation help for various systems.

Software release: libCEED v0.4

Version 0.4 of libCEED was released in March 2019. The release includes

- new CPU and GPU backends

- CPU backend optimizations

- initial support for operator composition

- performance benchmarking

- a Navier-Stokes demo

Laghos CTS-2 benchmark

The CEED team at LLNL developed a version of the Laghos miniapp to be used in the second edition of the Commodity Technology Systems procurement process. These systems leverage industry advances and open source software standards to build, field, and integrate Linux clusters of various sizes into production service.

The CTS-2 version of Laghos features a RAJA backend, improved robustness and figure of merit computation, and is available at https://github.com/CEED/Laghos/releases/tag/cts2.

CEED researchers at NAHOMCon19

With the next International Conference on Spectral and High-Order Methods (ICOSAHOM) meetings scheduled for 2020 in Vienna and 2022 in Seoul, it will be at least 10 years between US-based settings of the principal high-order methods conference, ICOSAHOM.

Because of the growing importance of high-order methods, several institutions have joined together to organize the inaugural North American High Order Methods Conference (NAHOMCon19), to be held in San Diego in the summer of 2019. The conference will focus on the many developments in high-order discretizations and applications that are taking place in North America.

Several CEED researchers will participate in the conference, including Misun Min and Tzanio Kolev, who will present two of the plenary talks, and Paul Fischer and Tim Warburton, who are serving on the scientific committee.

Software release: Laghos 2.0

Version 2.0 of the Laghos miniapp was released on November 19, 2018. The release includes

- CUDA, RAJA, OCCA and AMR versions of Laghos in the cuda/, raja/, occa/ and amr/ directories.

- New example: Gresho vortex.

- Added a conservative time integrator (RK2Avg) and computation of total energy.

- Improved the computations of the matrix diagonal by contracting the squares of the B matrices.

- Added diagonal preconditioners for both partial and full assembly.

- Support the Bernstein positive basis when using partial assembly for the velocity.

- Travis CI regression testing on GitHub.
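The item above about computing the matrix diagonal by contracting the squares of the B matrices can be sketched as follows. This is an illustration under stated assumptions, not the Laghos kernels: it assumes an operator of the form A = B^T D B with diagonal quadrature data D, for which the i-th diagonal entry is the sum over quadrature points q of B[q][i]^2 * D[q]. The small matrices and the function names are hypothetical.

```python
# Hypothetical small operator: 3 quadrature points, 2 basis functions.
B = [[1.0, 0.0],
     [0.5, 0.5],
     [0.0, 1.0]]
D = [1.0, 4.0, 1.0]  # diagonal quadrature-point data


def diagonal_from_squares(B, D):
    """diag(B^T D B)[i] = sum_q B[q][i]^2 * D[q]; no matrix is formed."""
    n_q, n_dof = len(B), len(B[0])
    return [sum(B[q][i] ** 2 * D[q] for q in range(n_q))
            for i in range(n_dof)]


def diagonal_from_matrix(B, D):
    """Reference path: assemble A = B^T D B, then read off its diagonal."""
    n_q, n_dof = len(B), len(B[0])
    A = [[sum(B[q][i] * D[q] * B[q][j] for q in range(n_q))
          for j in range(n_dof)]
         for i in range(n_dof)]
    return [A[i][i] for i in range(n_dof)]


print(diagonal_from_squares(B, D))
print(diagonal_from_matrix(B, D))
```

The first function needs only one pass over B and D, so it fits a partial assembly setting where the full matrix is never available. A diagonal obtained this way is what a diagonal (Jacobi) preconditioner requires.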

CEED minisymposiums at SIAM CSE19

CEED researchers are organizing two minisymposiums at the SIAM Conference on Computational Science and Engineering (CSE19) in Spokane, Washington, Feb 25 - Mar 1, 2019:

- Exascale Applications with High-Order Methods: Part 1 and Part 2

- Exascale Software for High-order Methods: Part 1 and Part 2

The minisymposiums will focus on high-order applications, discretization algorithms, and lightweight portable libraries targeting performance on exascale hardware.

MFEM part of E4S-0.1

MFEM was included in the first release of the Extreme-Scale Scientific Software Stack (E4S) software collection developed by the Software Technologies focus area of the ECP.

According to the E4S website, the primary purpose of this initial 0.1 release was to demonstrate the release approach based on Spack package maturity.

Future releases will include additional software products, with the ultimate goal of including all ECP ST software products.

CEED researchers at ATPESC18

Several CEED researchers presented at the 2018 edition of the Argonne Training Program on Extreme-Scale Computing, which is also part of the Exascale Computing Project.

The CEED presentations covered a wide variety of topics, from meshing, to GPU programming, dense and sparse linear algebra, and high-order discretizations on unstructured meshes.

Videos of all 2018 talks are available on YouTube.

Software release: libCEED 0.3

Version 0.3 of libCEED was released on September 30, 2018. The release includes

- New interface enabling active and passive fields

- Performance improvements and element vectorization

- Streamlined Fortran interface

- Improved testing and coverage

Second CEED annual meeting at CU Boulder

CEED held its second annual meeting August 8-10, 2018 at the University of Colorado Boulder.

The goal of the meeting was to report on the progress in the center, deepen existing and establish new connections with ECP hardware vendors, ECP software technologies projects and other collaborators, plan project activities and brainstorm/work as a group to make technical progress.

In addition to gathering together many of the CEED researchers, the meeting included representatives of ECP applications, hardware vendors, software technology and other interested projects.

See the meeting page for additional information.

Software release: libParanumal v0.1.0

The inaugural version 0.1.0 of libParanumal was released on July 31, 2018. The release includes

- Incompressible flow solver.

- Compressible flow solver.

- Galerkin-Boltzmann kinetic flow solver.

- Discontinuous Galerkin and continuous Galerkin spatial discretizations.

- A range of time steppers custom chosen for each flow solver.

- Support for meshes consisting of triangles, quadrilaterals, tetrahedra, or hexahedra up to at least degree 10.

- OCCA 1.0 based computational kernels.

- MPI based distributed computing

Software release: MAGMA v2.4.0

Version 2.4.0 of MAGMA was released on June 25, 2018. The release includes:

- Performance improvements across many batch routines, including batched TRSM, batched LU, batched LU-nopiv, and batched Cholesky

- Constrained least squares routines (magma_[sdcz]gglse) and dependencies

- Fixed some compilation issues with inf, nan, and nullptr.

For further details and download, see MAGMA Download.

Software release: OCCA v1.0

Version 1.0 of OCCA was released on June 13, 2018. The main focus for the release included:

- Updating the API to expose backend-specific features in a generic way

- New OKL Parser better suited for language transforms and error handling

Useful links:

- v0.2 -> v1.0 porting guide

- libocca.org for more info about OCCA

Software release: MFEM v3.4

Version 3.4 of MFEM was released on May 29, 2018. Some of the new additions in this release are:

- Significantly improved non-conforming unstructured AMR scalability.

- Integration with PUMI, the Parallel Unstructured Mesh Infrastructure from RPI.

- Block nonlinear operators and variable order NURBS.

- Conduit Mesh Blueprint support.

- General "high-order"-to-"low-order refined" field transfer.

- New specialized time integrators (symplectic, generalized-alpha).

- Twelve new examples and miniapps.

For more details, see the interactive documentation and the full CHANGELOG at https://mfem.org.

6th Nek5000 User Meeting held at U Florida

The 6th Nek5000 User/Developer Meeting was held in Tampa, FL, April 17-18, 2018. The event was hosted by the DOE Center for Multiphase Turbulence, which is headed by Prof. S. Balachandar at the University of Florida. Thirty-five researchers from industry, national labs, and American, Canadian, and European universities attended the event, which featured twenty-three presentations and extensive discussions about current and new trends in Nek5000 development. Next month will mark the 10th anniversary of Nek5000 going open source.

Software release: CEED v1.0

The CEED team released its first software distribution, CEED 1.0 consisting of 12 integrated Spack packages for libCEED, mfem, nek5000, nekcem, laghos, nekbone, hpgmg, occa, magma, gslib, petsc and pumi plus a new CEED meta-package.

With Spack, a user can install the whole CEED software stack simply with: spack install ceed.

As part of CEED 1.0, the team developed comprehensive documentation including software and compiler configurations for ALCF, OLCF, NERSC and LLNL:

| Platform | Architecture | Spack Configuration |
|---|---|---|
| Mac | darwin-x86_64 | packages |
| Linux (RHEL7) | linux-rhel7-x86_64 | packages |
| Cori (NERSC) | cray-CNL-haswell | packages |
| Edison (NERSC) | cray-CNL-ivybridge | packages |
| Theta (ALCF) | cray-CNL-mic_knl | packages |
| Titan (OLCF) | cray-CNL-interlagos | packages |
| CORAL-EA (LLNL) | blueos_3_ppc64le_ib | packages, compilers |
| TOSS3 (LLNL) | toss_3_x86_64_ib | packages, compilers |

For more information visit https://ceed.exascaleproject.org/ceed-1.0.

Software release: libCEED v0.2

Version 0.2 of libCEED, the CEED API library, was released in March 2018 with major improvements in the OCCA backend.

libCEED is a lightweight portable library that allows, for the first time, a wide variety of applications (written in C, C++, Fortran) to share a wide variety of discretization kernels (CPU, GPU, OpenMP, OpenCL), including high-performance GPU kernels.

libCEED comes with several examples of its usage, ranging from standalone C codes in the /examples/ceed directory to examples based on external packages, such as MFEM, PETSc and Nek5000. Below is an illustration of how libCEED enables these very different codes (C++, C, F77) to take advantage of a common set of GPU kernels (see also the CEED 1.0 GPU demo):

# libCEED examples on CPU and GPU

cd examples/ceed

make

./ex1 -ceed /cpu/self

./ex1 -ceed /gpu/occa

cd ../..

# MFEM+libCEED examples on CPU and GPU

cd examples/mfem

make

./bp1 -ceed /cpu/self -no-vis

./bp1 -ceed /gpu/occa -no-vis

cd ../..

# PETSc+libCEED examples on CPU and GPU

cd examples/petsc

make

./bp1 -ceed /cpu/self

./bp1 -ceed /gpu/occa

cd ../..

# Nek+libCEED examples on CPU and GPU

cd examples/nek5000

./make-nek-examples.sh

./run-nek-example.sh -ceed /cpu/self -b 3

./run-nek-example.sh -ceed /gpu/occa -b 3

cd ../..

For more information visit https://github.com/CEED/libCEED.

Panayot Vassilevski named SIAM fellow

Panayot Vassilevski, who is part of the CEED team at LLNL, was named a 2018 SIAM fellow for his work on designing algebraic approaches for creating and analyzing multilevel algorithms.

Panayot is the editor-in-chief for Numerical Linear Algebra with Applications and has published many papers and a monograph on Multilevel Block Factorization Preconditioners.

In CEED, Panayot is working on matrix-free preconditioners for high-order finite element discretizations.

Congratulations Panayot!

Benchmark release by Paranumal team

The CEED group at Virginia Tech released standalone implementations of CEED's BK1.0, BK3.0, and BK3.5 benchmark problems. For results and discussion, see the ["CEED Code Competition: VT software release"](https://www.paranumal.com/single-post/2018/02/01/CEED-Code-Competition-bake-off-problems) entry in the Paranumal blog.

Workshop on Batched, Reproducible, and Reduced Precision BLAS

CEED's [UTK team](magma.md) organized a two-session minisymposium at the SIAM Conference on Parallel Processing for Scientific Computing in Tokyo, Japan, March 7-10, 2018, devoted to Batched BLAS standardization.

The minisymposium is part of our efforts on standardization and co-design of exascale discretization APIs with application developers, hardware vendors and ECP software technologies projects. The goal is to extend the BLAS standard to include batched BLAS computational patterns/"application motifs" that are essential for representing and implementing tensor contractions.

Besides participation from the CEED project, stakeholders from ORNL, Sandia, NVIDIA, Intel, IBM, and universities were invited.
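The batched pattern discussed above, where many small independent matrix products are issued as one logical operation, can be sketched in pure Python. This is only an illustration of the computational motif, not the proposed Batched BLAS interface or any MAGMA routine. The function names and the 2x2 data are hypothetical. The example applies the same small basis matrix to every element's data on both sides, which is the shape a 2D tensor contraction takes.

```python
def gemm(A, B):
    """Dense product of two small matrices stored as nested lists."""
    inner = len(B)
    return [[sum(A[i][k] * B[k][j] for k in range(inner))
             for j in range(len(B[0]))]
            for i in range(len(A))]


def transpose(A):
    """Transpose of a matrix stored as nested lists."""
    return [list(row) for row in zip(*A)]


def batched_gemm(As, Bs):
    """Multiply many independent small matrix pairs in one logical call."""
    if len(As) != len(Bs):
        raise ValueError("batch sizes must match")
    return [gemm(A, B) for A, B in zip(As, Bs)]


# One shared 1D basis matrix and per-element data (hypothetical values).
basis = [[1.0, 1.0],
         [1.0, -1.0]]
elements = [
    [[1.0, 0.0], [0.0, 1.0]],
    [[1.0, 2.0], [3.0, 4.0]],
]
n = len(elements)

# 2D tensor contraction per element, V_e = basis * U_e * basis^T,
# expressed as two batched products over all elements.
step1 = batched_gemm([basis] * n, elements)
result = batched_gemm(step1, [transpose(basis)] * n)
print(result)
```

A real batched routine would run the whole batch in a single optimized kernel instead of looping in the host language. Avoiding that per-call overhead for very small matrices is the reason to standardize the interface.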

New website launched by the Virginia Tech CEED team

A new website was recently launched by the Parallel Numerical Algorithms (Paranumal) research group at Virginia Tech. The site includes a blog that gives some practical computing tips related to high performance implementations of finite element methods developed as part of the CEED project.

Initial release of libCEED: The CEED API library

The initial version of libCEED, the CEED API library, was released in December 2017.

libCEED is a high-order API library that, for the first time, provides a common operator description at the algebraic level, allowing a wide variety of applications to take advantage of the efficient operator evaluation algorithms in the different CEED packages from a single source.

Our long-term vision for libCEED is to include a variety of back-end implementations, ranging from simple reference kernels, to highly optimized kernels targeting specific devices (e.g. GPUs) or specific polynomial orders.

For more information visit https://github.com/CEED/libCEED.
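To give a feel for what an algebraic operator description can look like, the following pure-Python sketch composes a finite element operator from three pieces: an element restriction G (gather and scatter by index lists), a basis evaluation B, and pointwise quadrature data D, applied as G^T B^T D B G. This is an assumption-laden illustration and does not use the libCEED API. The symbols, the two-element 1D mesh, and the function name apply_operator are hypothetical choices for this example.

```python
import math

# Linear basis evaluated at the two Gauss points of the reference element.
g = 1.0 / math.sqrt(3.0)
B = [[0.5 * (1.0 - x), 0.5 * (1.0 + x)] for x in (-g, g)]

# Two elements on [0, 1] sharing the middle node: 3 global unknowns.
element_dofs = [[0, 1], [1, 2]]
h = 0.5  # element length

# Quadrature data per element: weight (1.0) times Jacobian determinant.
D = [[h / 2.0, h / 2.0] for _ in element_dofs]


def apply_operator(x):
    """Apply y = G^T B^T D B G x element by element (a mass operator)."""
    y = [0.0] * len(x)
    for e, dofs in enumerate(element_dofs):
        x_e = [x[i] for i in dofs]                                  # G
        u_q = [sum(B[q][i] * x_e[i] for i in range(2))
               for q in range(2)]                                   # B
        v_q = [D[e][q] * u_q[q] for q in range(2)]                  # D
        y_e = [sum(B[q][i] * v_q[q] for q in range(2))
               for i in range(2)]                                   # B^T
        for local, i in enumerate(dofs):                            # G^T
            y[i] += y_e[local]
    return y


y = apply_operator([1.0, 1.0, 1.0])
print(y)
```

Because each piece is a separate, simple object, a backend can swap in an optimized implementation of any one of them without the application changing how it describes the operator.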

Software release: MFEM v3.3.2

Version 3.3.2 of MFEM was released on November 10, 2017. Some of the new additions in this release are:

- Support for high-order mesh optimization based on the target-matrix optimization paradigm from the ETHOS project.

- Implementation of the community policies in xSDK, the Extreme-scale Scientific Software Development Kit.

- Integration with the STRUMPACK parallel sparse direct solver and preconditioner.

- Several new linear interpolators, five new examples and miniapps.

- Various memory, performance, discretization and solver improvements, including physical-to-reference space mapping capabilities.

- Continuous integration testing on Linux, Mac and Windows.

For more details, see the interactive documentation and the full CHANGELOG at https://mfem.org.

CEED participates in xSDK and FASTMath

MFEM joined xSDK, the Extreme-scale Scientific Software Development Kit in ECP's software technologies focus area as of release xSDK-0.3.0, see https://xsdk.info/packages.

MFEM and PUMI are also part of the FASTMath institute in the SciDAC program, see https://scidac5-fastmath.lbl.gov/software-catalog.

Software release: Nek5000 v17.0

Nek5000 version 17.0 was released as a major upgrade to Nek5000. Major feature improvements include:

- Refactored build system.

- New user-input parameter file format (.par replacing .rea).

- Characteristics (large time-step) support for moving mesh problems.

- Moving mesh support for the $PN-PN$ formulation.

- Improved stability for $PN-PN$ with variable viscosity.

- Support for mixed Helmholtz/CVODE solves.

- New fast AMG setup tool based on HYPRE.

- New EXODUSII mesh converter.

- New interface to libxsmm (fast MATMUL library).

- Extended lowMach solver for time varying thermodynamic pressure.

- Added DG for scalars.

- Reduced solver initialization time (parallel binary reader for all input files).

- Automatic general mesh-to-mesh transfer for restarts.

- Refactored support for overlapping domains (NekNek).

- Added high-pass filter relaxation (alternative to explicit filter).

- Refactored residual projection including support for coupled Helmholtz solves.

Nekbone and Laghos join proxy app suites

The Nekbone and Laghos miniapps developed in CEED were selected to be part of ECP's initial Proxy Applications Suite.

Both miniapps were also picked to be CORAL-2 benchmarks.

Laghos was also selected as one of LLNL's ASC co-design miniapps.

6th Nek5000 User Meeting to be held at U Florida

The 6th Nek5000 User/Developer Meeting will be hosted by the DOE PSAAP-II Compressible Multiphase Turbulence (CMT) center in Tampa, FL, March 17-18, 2018.

Nek5000 hackathon at UIUC

The inaugural Nek5000 Hackathon was held at the NCSA Building, University of Illinois, Urbana-Champaign (UIUC), IL on Nov 12-14, 2017.

The event was attended by researchers and Nek5000 developers to promote the application of Nek5000 to new problems from industry, national laboratories, and academia. Twenty-five participants spent three days working on setting up new examples, developing new features, and helping one another to get maximum performance on their applications. Some of the more prominent exchanges of ideas included standardization of synthetic turbulent inflow techniques, use of CVODE for pure advection-diffusion problems, and the use of the characteristics methods for moving geometry applications.

For more details, see the Nek5000 hackathon website.

CEED organizing minisymposium at ICOSAHOM 2018

CEED is organizing a minisymposium, Efficient High-Order Finite Element Discretizations at Large Scale, at the International Conference on Spectral and High-Order Methods (ICOSAHOM 2018) in London, UK, Jul 9-13, 2018.

The goal of the minisymposium is to discuss next-generation high-order discretization algorithms and software, based on finite/spectral element approaches, that will enable a wide range of important scientific applications to run efficiently on future architectures.

Best Paper Award at NURETH-17

CEED researchers (P. Fischer, E. Merzari, A. Obabko) won a Best Paper Award at the 17th International Topical Meeting on Nuclear Reactor Thermal Hydraulics (NURETH-17), held in China in September 2017, with a paper entitled High-Fidelity Simulation of Flow Induced Vibrations in Helical Steam Generators for Small Modular Reactors.

CEED attending Cray, AMD and Intel Deep-Dives

CEED researchers and representatives of the Nek and MFEM teams will attend the October 2017 ECP vendor deep-dive meetings:

- Cray deep-dive in Bloomington, MN on Oct 18-19

- AMD deep-dive in Austin, TX on Oct 24-25

- Intel deep-dive in Hudson, MA on Oct 21-Nov 2

Topics of discussion include advanced technology and memory design, strong scaling considerations and the porting and evaluation of CEED's bake-off problems and miniapps (Nekbone, Laghos, NekCEM CEEDling).

New Nekbench repository

A new Nekbench repository has been released to provide scripts that simplify the benchmarking of Nek5000.

The user provides ranges for important parameters (e.g., processor counts and local problem sizes) and a test type (e.g., scaling or ping-pong test). Nekbench then runs the given test over that parameter space using a user-supplied Nek5000 case file (for ping-pong tests, the case file is optional).
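As a rough illustration of what running a test over a parameter space means, the Python sketch below enumerates every combination of processor count and local problem size. It is not Nekbench's actual code or interface (Nekbench itself is written in bash); the function names, the power-of-two spacing, and the sample values are all assumptions made for this example.

```python
from itertools import product


def powers_of_two(lo, hi):
    """Return lo, 2*lo, 4*lo, ... up to and including hi (hypothetical helper)."""
    if lo < 1:
        raise ValueError("lo must be at least 1")
    values = []
    v = lo
    while v <= hi:
        values.append(v)
        v *= 2
    return values


def parameter_space(proc_counts, local_sizes):
    """Return every (processor count, local problem size) pair in the sweep."""
    return list(product(proc_counts, local_sizes))


# Assumed sample ranges: 1 to 8 processors, two local problem sizes.
runs = parameter_space(powers_of_two(1, 8), [1000, 4000])

for procs, n_local in runs:
    # A real benchmarking script would launch a job here; this sketch only
    # prints the configuration it would run.
    print(f"test=scaling procs={procs} local_size={n_local}")
```

Each pair corresponds to one benchmark run, so the number of runs is the product of the lengths of the two ranges.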

Nekbench is written in the bash scripting language and runs on any Unix-like operating system that supports bash. It has been successfully tested on Linux laptops/desktops, ALCF Theta, NERSC Cori (KNL and Haswell), and NERSC Edison machines for scaling tests.

Planned extensions for Nekbench include adding more machine types like ANL's Cetus, additional support for the ping-pong test type, and automated plot generation (e.g., scaling study graphs) for each test run.

GPU ports of Nek and Laghos

GPU acceleration is a main focus of the performance optimization efforts in CEED. Recent progress in this direction includes GPU ports of CEED's Nek5000 application and the Nekbone and Laghos miniapps.

For Nek5000, an initial GPU-enabled version has been developed based on OpenACC. For Nekbone, a pure OpenACC implementation and a hybrid OpenACC/CUDA implementation, which uses a CUDA kernel for matrix-vector multiplication, have been developed.

For Laghos, a GPU-enabled version has been released using the OCCA interface. With this approach, the user can run Laghos in distributed mode with a different device type for each MPI process, whether serial C++, OpenMP, or CUDA.

First CEED annual meeting held at LLNL

CEED held its first annual meeting in August 2017 at the HPC Innovation Center of Lawrence Livermore National Laboratory.

The goal of the meeting was to report on the progress in the center, deepen existing and establish new connections with ECP hardware vendors, ECP software technologies projects and other collaborators, plan project activities and brainstorm/work as a group to make technical progress.

In addition to gathering together many of the CEED researchers, the meeting included representatives of the ECP management, hardware vendors, software technology and other interested projects.

CEED researchers at ATPESC17

Six CEED researchers presented at the 2017 edition of the Argonne Training Program on Extreme-Scale Computing, now part of the Exascale Computing Project.

The CEED presentations covered a wide variety of topics, from an overview of Theta to GPU programming, dense and sparse linear algebra, and high-order discretizations on unstructured meshes.

Videos of all 2017 talks are available on YouTube. CEED researchers have also participated in past editions of the meeting.

CEED BPs and benchmarks repository released

CEED released an initial set of bake-off problems (BPs), which are simple kernels designed to test and compare the performance of high-order codes, both internally in CEED and in the broader high-order community.

In addition to the benchmark descriptions on the CEED BPs page, a benchmarks repository is publicly available with several implementations of the CEED bake-off problems. Currently, MFEM, Nek5000 and deal.II are included; see the directories tests/mfem_bps, tests/nek5000_bps and tests/dealii_bps, respectively.

New Laghos and NekCEM CEEDling miniapps released

Two new miniapps developed in CEED were released in June 2017: Laghos and NekCEM CEEDling.

Laghos (LAGrangian High-Order Solver) is a new miniapp developed in CEED that solves the time-dependent Euler equations of compressible gas dynamics in a moving Lagrangian frame using unstructured high-order finite element spatial discretization and explicit high-order time-stepping. In CEED, Laghos serves as a proxy for a sub-component of the MARBL/LLNLApp application.

NekCEM CEEDling is a new NekCEM miniapp that solves the time-domain Maxwell equations for electromagnetic systems.

For more details, see the CEED miniapps page and the Laghos and NekCEM CEEDling repositories on GitHub.

Paper with MPICH at SC17

A joint paper with the MPICH group, Why is MPI so Slow? Analyzing the fundamental limits in implementing MPI-3.1, was accepted at Supercomputing 2017. The paper provides an in-depth analysis of the software overheads in the MPI performance-critical path and exposes mandatory performance overheads that are unavoidable based on the MPI-3.1 specification.

STRUMPACK support in MFEM

Support for the sparse direct solver and preconditioner STRUMPACK has been integrated in MFEM.

STRUMPACK is being developed at LBNL and is part of the ECP project Factorization Based Sparse Solvers and Preconditioners (Xiaoye Sherry Li and Pieter Ghysels). The STRUMPACK solver is based on multifrontal sparse Gaussian elimination and uses hierarchically semi-separable matrices to compress fill-in. It can be used as an exact direct solver or as an algebraic, robust and parallel preconditioner for a range of discretized PDE problems.

2017 PETSc User Meeting

Over 75 participants from all over the world attended the PETSc User Meeting, held June 14-16 in Boulder, CO. Hosted by the University of Colorado Boulder, the event consisted of a one-day tutorial on the solver library PETSc and showcased the latest research enabled by the functionality available in PETSc. The meeting agenda covered a total of 15 talks, four posters, and two panels.

Thanks to generous support from Intel and Tech-X, 22 students received travel grants and got to learn about the latest techniques on the large-scale numerical solution of partial differential equations.

PETSc is a suite of data structures and routines for the scalable (parallel) solution of scientific applications modeled by partial differential equations. It has become one of the most widely used numerical software packages of its kind and has users in application areas ranging from acoustics and arterial flow to seismology and semiconductors.

GPU Hackathon at BNL

The Nek/CEED team participated in the GPU Hackathon 2017, held at Brookhaven National Laboratory on June 5-9, 2017. Our team focused on running and tuning GPU-enabled versions of Nek5000/Nekbone/NekCEM on large-scale GPU systems for small modular reactor, thermal fluids, and meta-materials modeling.

Workshop on Batched, Reproducible, and Reduced Precision BLAS

The second Workshop on Batched, Reproducible, and Reduced Precision BLAS was held in Atlanta, GA on February 23-25, 2017, with many members of the CEED MAGMA team in attendance.

The goal of this workshop was to touch on extending the Basic Linear Algebra Subprograms (BLAS). The existing BLAS have proven to be very effective in supporting portable, efficient software for sequential computers and some of the current class of high-performance computers. New computational needs in many applications have motivated an investigation into extending the currently accepted standards to provide greater parallelism for small size operations, reproducibility, and reduced precision support.

Of particular interest to CEED is the use of batched BLAS for finite element tensor contractions, and thus our team is interested in the establishment of a batched BLAS standard, highly-optimized implementations, and support from vendors on various architectures.
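To make the idea of a batched operation concrete, here is a minimal pure-Python sketch of a batched matrix-matrix product: many small, independent products of the same shape, such as one small basis matrix applied to the data of each element. It is not the interface of any BLAS library or proposed standard; the function name, the list-of-rows layout, and the sample matrices are assumptions made for illustration.

```python
def batched_gemm(a_batch, b_batch):
    """Compute a_batch[i] @ b_batch[i] for every i (naive, illustrative only).

    Each matrix is a list of rows. The products are independent of one
    another, which is what allows an optimized library to run them in parallel.
    """
    if len(a_batch) != len(b_batch):
        raise ValueError("batch sizes differ")
    c_batch = []
    for a, b in zip(a_batch, b_batch):
        inner = len(b)
        if any(len(row) != inner for row in a):
            raise ValueError("inner dimensions do not match")
        cols = len(b[0])
        c = [[sum(a[i][k] * b[k][j] for k in range(inner)) for j in range(cols)]
             for i in range(len(a))]
        c_batch.append(c)
    return c_batch


# Hypothetical data: one 2x2 "basis" matrix applied to the 2x2 coefficient
# block of each of three elements.
basis = [[1.0, 1.0],
         [0.0, 2.0]]
element_data = [
    [[1.0, 0.0], [0.0, 1.0]],
    [[2.0, 3.0], [4.0, 5.0]],
    [[0.0, 0.0], [1.0, 1.0]],
]
results = batched_gemm([basis] * len(element_data), element_data)
print(results[1])  # [[6.0, 8.0], [8.0, 10.0]]
```

A tuned batched implementation would replace the outer Python loop with a single library call that processes all of the small products at once.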

This is the second workshop of an open forum to discuss and formalize details related to batched, reproducible, and reduced precision BLAS. The agenda and the talks from the first workshop can be found here.

Software release: MFEM v3.3

Version 3.3 of MFEM, a lightweight, general, scalable C++ library for finite element methods and a main partner in CEED, was released on January 28, 2017 at https://mfem.org.

The goal of MFEM is to enable high-performance scalable finite element discretization research and application development on a wide variety of platforms, ranging from laptops to exascale supercomputers.

It has many features, including:

- 2D and 3D, arbitrary order H1, H(curl), H(div), L2, NURBS elements.

- Parallel version scalable to hundreds of thousands of MPI cores.

- Conforming/nonconforming adaptive mesh refinement (AMR), including anisotropic refinement, derefinement and parallel load balancing.

- Galerkin, mixed, isogeometric, discontinuous Galerkin, hybridized, and DPG discretizations.

- Support for triangular, quadrilateral, tetrahedral and hexahedral elements, including arbitrary order curvilinear meshes.

- Scalable algebraic multigrid, time integrators, and eigensolvers.

- Lightweight interactive OpenGL visualization with the MFEM-based GLVis tool.

Some of the new additions in version 3.3 are:

- Comprehensive support for the linear and nonlinear solvers, preconditioners, time integrators and other features from the PETSc and SUNDIALS suites.

- Linear system interface for action-only linear operators including support for matrix-free preconditioning and low-order-refined spaces.

- General quadrature and nodal finite element basis types.

- Scalable parallel mesh format.

- Thirty-six new integrators for common families of operators.

- Sixteen new serial and parallel example codes.

- Support for CMake, on-the-fly compression of file streams, and HDF5-based output following the Conduit mesh blueprint specification.

MFEM is being developed in CASC, LLNL and is freely available under LGPL 2.1. For more details, see the interactive documentation and the full CHANGELOG.

CEED co-design center announced

The Exascale Computing Project (ECP) announced on November 11, 2016 its selection of four co-design centers, including CEED: the Center for Efficient Exascale Discretizations, which is a research partnership between Lawrence Livermore National Laboratory; Argonne National Laboratory; the University of Illinois Urbana-Champaign; Virginia Tech; the University of Tennessee, Knoxville; the University of Colorado Boulder; and the Rensselaer Polytechnic Institute (RPI).

Additional news coverage can be found in LLNL Newsline and the ANL press release.

R&D 100 Award for NekCEM / Nek5000

NekCEM/Nek5000: Scalable High-Order Simulation Codes received a 2016 R&D 100 Award, given by R&D Magazine to 100 top new technologies for the year.

The R&D 100 citation reads: "NekCEM/Nek5000: Release 4.0: Scalable High-Order Simulation Codes is an open-source simulation-software package that delivers highly accurate solutions for a wide range of scientific applications including electromagnetics, quantum optics, fluid flow, thermal convection, combustion and magnetohydrodynamics. It features state-of-the-art, scalable, high-order algorithms that are fast and efficient on platforms ranging from laptops to the world's fastest computers. The size of the physical phenomena that can be simulated with this package ranges from quantum dots for nanoscale devices to accretion disks surrounding black holes. NekCEM provides simulation capabilities for the analysis of electromagnetic and quantum optical devices, such as particle accelerators and solar cells. Nek5000 provides turbulent flow simulation capabilities for a variety of thermal-fluid problems including nuclear reactors, internal combustion engines, vascular flows, and ocean currents."

See the ANL press release for more information.